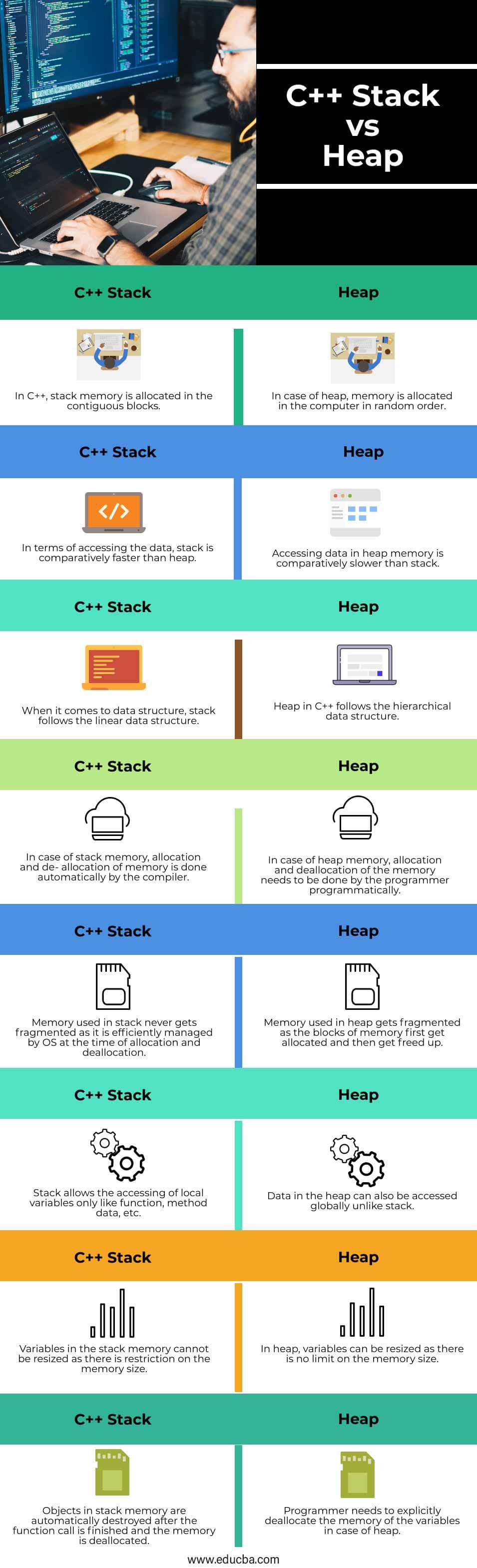

An application's memory is generally divided into a heap area and a stack area. At run time, a program can actively request memory from the heap; that memory is allocated by the memory allocator and reclaimed by the garbage collector. The previous two sections analyzed in detail how heap memory is requested and released, and this section introduces how Go manages stack memory.

Memory in the stack area is generally allocated and released automatically by the compiler. It holds function arguments and local variables, which are created when the function is called and destroyed when it returns, so they generally do not live long in the program. This linear allocation strategy is very efficient, but engineers often have no control over how stack memory is allocated, because that work is done almost entirely by the compiler.

Registers are a scarce resource in the central processing unit (CPU). They have very limited storage capacity but provide the fastest read and write speeds, and taking full advantage of that speed helps build high-performance applications. Although a physical machine has very few registers, operations on the stack area use at least two of them, which shows how important stack memory is to applications.

A stack register is a kind of CPU register whose main role is to keep track of the function call stack. Go assembly code uses two stack registers, BP and SP, which store the base pointer of the stack and the address of the top of the stack, respectively. Stack memory is closely tied to function calls: as introduced in the section on function calls, the memory between BP and SP is the call stack of the current function.

For historical reasons, stack memory grows from high addresses toward low addresses, and an application only needs to modify the value of the SP register to request or release stack memory.

When we call pthread_create on Linux, the process starts a new thread. If the user has not specified a thread stack size through the soft resource limit RLIMIT_STACK, the operating system chooses a default stack size that depends on the architecture. On most architectures the default is about 2 to 4 MB, and a few use a 32 MB stack; user programs store function parameters and local variables on the allocated stack. However, a fixed stack size is not the right value in some scenarios. If a program runs hundreds or even thousands of threads at the same time, most of them use only a small part of their stack, so much of the reserved space goes unused; conversely, when the function call stack is very deep, a fixed stack size cannot meet the program's needs.

Threads and processes are both contexts for executing code, but if an application contains hundreds or thousands of execution contexts and each one is a thread, they take up a lot of memory and incur additional overhead.

In languages that require manual memory management, such as C and C++, the engineer decides whether an object or structure is allocated on the stack or on the heap, which makes the engineer's work more challenging.
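
To make the idea of requesting and releasing stack memory by only moving SP more concrete, here is a minimal sketch in Go. It is a toy model, not how the Go runtime or any CPU implements its stack: the simStack type and its push and pop methods are hypothetical names made up for this illustration, and a byte slice stands in for the stack area, with the end of the slice playing the role of the highest address.

```go
package main

import (
	"errors"
	"fmt"
)

// simStack is a toy model of a stack area (hypothetical, for illustration):
// a fixed block of memory plus a simulated stack pointer.
type simStack struct {
	mem []byte
	sp  int // simulated SP: offset of the current top of the stack
}

// newSimStack creates a stack of the given size with SP at the high end.
func newSimStack(size int) *simStack {
	return &simStack{mem: make([]byte, size), sp: size}
}

// push reserves n bytes by moving SP toward lower offsets and returns
// the offset where the reserved region starts.
func (s *simStack) push(n int) (int, error) {
	if n < 0 || n > s.sp {
		return 0, errors.New("simulated stack overflow")
	}
	s.sp -= n
	return s.sp, nil
}

// pop releases n bytes by moving SP back toward higher offsets.
func (s *simStack) pop(n int) error {
	if n < 0 || s.sp+n > len(s.mem) {
		return errors.New("simulated stack underflow")
	}
	s.sp += n
	return nil
}

func main() {
	s := newSimStack(64)
	a, _ := s.push(16)      // space for an outer call
	b, _ := s.push(8)       // a nested call lands at a lower offset
	fmt.Println(a, b, s.sp) // 48 40 40
	_ = s.pop(8)            // the nested call returns
	fmt.Println(s.sp)       // 48
}
```

Both operations are a single addition or subtraction on sp, which is why this linear strategy is so cheap. The fixed size passed to newSimStack also shows the limitation of a fixed stack: once sp reaches zero, a deeper call has nowhere to go.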

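The RLIMIT_STACK soft limit mentioned in connection with pthread_create can be inspected from a Go program. The sketch below assumes a Unix-like system and uses syscall.Getrlimit from the standard library; stackLimit and describe are helper names introduced here for illustration. It only reads the limit of the current process and does not change how any thread stack is sized.

```go
package main

import (
	"fmt"
	"syscall"
)

// stackLimit returns the soft and hard RLIMIT_STACK values in bytes.
func stackLimit() (uint64, uint64, error) {
	var lim syscall.Rlimit
	if err := syscall.Getrlimit(syscall.RLIMIT_STACK, &lim); err != nil {
		return 0, 0, err
	}
	return uint64(lim.Cur), uint64(lim.Max), nil
}

// describe formats a limit for printing. An all-ones value is treated as
// "unlimited", which is an assumption that matches Linux.
func describe(v uint64) string {
	if v == ^uint64(0) {
		return "unlimited"
	}
	return fmt.Sprintf("%d KiB", v/1024)
}

func main() {
	soft, hard, err := stackLimit()
	if err != nil {
		fmt.Println("getrlimit failed:", err)
		return
	}
	fmt.Printf("RLIMIT_STACK soft: %s, hard: %s\n", describe(soft), describe(hard))
}
```

The output depends on the machine and shell settings, so no expected value is shown. When the soft limit is reported as unlimited, the architecture-dependent default described above is what applies to new threads.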